Overview

Apache Kafka is a distributed streaming platform widely used to build real-time data pipelines and streaming applications. As the volume of data to be processed in real time grows, a Kafka cluster eventually needs more capacity. This guide walks through expanding a Kafka cluster step by step, with practical examples to illustrate each stage.

Understanding Kafka Cluster Expansion

Before proceeding with the expansion, it is important to understand some key components of the Kafka ecosystem. A Kafka cluster consists of one or more brokers, and it scales out by adding brokers. Topics, where messages are stored, are divided into partitions, and the replicas of each partition can be spread across multiple brokers for load balancing and redundancy.

Prerequisites

- Existing Kafka cluster running with at least one broker

- Zookeeper ensemble managing the Kafka brokers

- Basic understanding of Kafka architecture and concepts

- Access to Kafka and Zookeeper configuration files

- Proper backup of Kafka data and configurations

Step-by-Step Instructions

Step 1: Provisioning Additional Brokers

First, you need to set up new machines that will act as additional Kafka brokers. Ensure that these machines meet the hardware requirements and have the necessary software installed, including the Kafka binaries.

Example:

# Install Kafka on new broker node

wget https://archive.apache.org/dist/kafka/2.6.0/kafka_2.13-2.6.0.tgz

tar -xzf kafka_2.13-2.6.0.tgz

Step 2: Configuring New Brokers

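The new brokers must be configured before they can join the cluster. In config/server.properties, give each broker a unique broker.id that no other broker in the cluster uses, and set zookeeper.connect to the same Zookeeper ensemble (including any chroot path) that the existing brokers use.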

Example:

# Set unique broker ID and Zookeeper connect string

broker.id=3

zookeeper.connect=zoo1:2181,zoo2:2181,zoo3:2181
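When several brokers are being added, editing each server.properties by hand is easy to get wrong. The following is a minimal sketch of a helper that sets a single property in place. The function name `set_prop` is hypothetical and not part of Kafka, and the sketch assumes entries are written as `key=value` with no spaces around the equals sign.

```shell
# Hypothetical helper: set key=value in a properties file, replacing an
# existing "key=..." line or appending one if the key is absent.
set_prop() {
  file=$1 key=$2 value=$3
  awk -F= -v k="$key" -v v="$value" '
    $1 == k { print k "=" v; found = 1; next }   # overwrite the existing entry
    { print }                                    # keep every other line as-is
    END { if (!found) print k "=" v }            # key was missing: append it
  ' "$file" > "$file.tmp" && mv "$file.tmp" "$file"
}

# Example usage on a new broker (values taken from the example above):
#   set_prop config/server.properties broker.id 3
#   set_prop config/server.properties zookeeper.connect zoo1:2181,zoo2:2181,zoo3:2181
```

Commented-out entries such as `#broker.id=0` are left untouched, so the helper appends a fresh line in that case rather than uncommenting the old one.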

Step 3: Starting New Brokers

Once configured, start the new brokers and verify they are connected to the cluster properly by checking the logs or by using Kafka’s command-line tools.

Example:

# Start the Kafka broker (add -daemon before the config path to run it in the background)

./bin/kafka-server-start.sh config/server.properties
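One way to confirm that a new broker has joined is to list the broker IDs registered in Zookeeper with the zookeeper-shell.sh script that ships with Kafka. The sketch below wraps that check in a small helper. The function name `has_broker_id` is hypothetical, and it assumes the shell prints the IDs as a bracketed list such as `[0, 1, 2, 3]` on a line of its own, possibly after some connection messages.

```shell
# Hypothetical helper: succeed if broker id $2 appears in the "[0, 1, 2]"
# style list found in $1 (the captured output of the ls command).
has_broker_id() {
  # Keep the last line that starts with "[", then strip brackets and spaces.
  ids=$(printf '%s\n' "$1" | grep '^\[' | tail -n 1 | sed 's/[][ ]//g')
  case ",$ids," in
    *",$2,"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example usage against a live ensemble:
#   out=$(bin/zookeeper-shell.sh zoo1:2181 ls /brokers/ids)
#   has_broker_id "$out" 3 && echo "broker 3 is registered"
```

Wrapping the list in commas before matching keeps an ID such as 3 from matching inside 13 or 30.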

Step 4: Reconfiguring Topics for Load Balancing

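Kafka does not move existing partitions onto new brokers automatically; until partitions are reassigned, the new brokers only receive partitions of topics created after they joined. To spread existing topics across the entire cluster, either write a reassignment plan by hand that maps each partition to specific brokers, or let Kafka’s reassignment tool propose a plan for you. The examples below use the --zookeeper flag, which works on the Kafka 2.6.0 release installed in Step 1; Kafka 3.0 and later removed it from these tools in favor of --bootstrap-server.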

Example:

# Write the partition reassignment plan (each replicas list names the target brokers)

cat << EOF > expand-cluster-reassignment.json

{

"version":1,

"partitions":[

{"topic": "example-topic", "partition": 0, "replicas": [0,2,3]},

{"topic": "example-topic", "partition": 1, "replicas": [1,3,4]}

]

}

EOF

# Execute the reassignment, passing the plan file by path

bin/kafka-reassign-partitions.sh --zookeeper zoo1:2181 --reassignment-json-file expand-cluster-reassignment.json --execute
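For the automatic route, the same tool has a --generate mode that proposes an assignment for a list of topics across a list of brokers. The sketch below assumes the topic name and the broker IDs 0 through 4 used in this guide. The file name topics-to-move.json and the wrapper name `propose_reassignment` are hypothetical.

```shell
# List the topics whose partitions should be redistributed.
cat > topics-to-move.json << 'EOF'
{
  "version": 1,
  "topics": [
    {"topic": "example-topic"}
  ]
}
EOF

# Hypothetical wrapper: ask the tool to propose an assignment across the
# given brokers. $1 = Zookeeper address, $2 = comma-separated broker ids.
propose_reassignment() {
  bin/kafka-reassign-partitions.sh --zookeeper "$1" \
    --topics-to-move-json-file topics-to-move.json \
    --broker-list "$2" --generate
}

# Example: propose_reassignment zoo1:2181 "0,1,2,3,4"
```

The --generate mode only prints the current assignment and a proposed one; it does not move any data. Save the proposed JSON to a file and pass it to --execute, and keep the current assignment in case you need to roll back.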

Step 5: Monitoring the Reassignment

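After triggering the reassignment, re-run the tool with --verify and the same JSON file until every partition reports completion. Moving large partitions can take a long time, so check periodically rather than assuming the cluster is balanced as soon as the --execute command returns.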

Example:

# Monitor the partition reassignment

bin/kafka-reassign-partitions.sh --zookeeper zoo1:2181 --reassignment-json-file expand-cluster-reassignment.json --verify
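Rather than re-running the check by hand, the verification can be polled in a loop. The following is a rough sketch under one stated assumption: unfinished partitions are reported on lines containing the words "in progress". The exact wording varies between Kafka versions, so confirm it against your own output first. The function names are hypothetical.

```shell
# Succeed if the captured --verify output still reports unfinished moves.
# Assumption: unfinished partitions are reported with the words "in progress".
reassignment_in_progress() {
  printf '%s\n' "$1" | grep -q 'in progress'
}

# Hypothetical wrapper: poll --verify until nothing is in progress.
# $1 = Zookeeper address, $2 = reassignment JSON file, $3 = poll interval (s).
wait_for_reassignment() {
  while :; do
    out=$(bin/kafka-reassign-partitions.sh --zookeeper "$1" \
      --reassignment-json-file "$2" --verify) || return 1
    printf '%s\n' "$out"                      # show the latest status
    reassignment_in_progress "$out" || return 0
    sleep "${3:-30}"
  done
}

# Example: wait_for_reassignment zoo1:2181 expand-cluster-reassignment.json 60
```

The loop stops as soon as no partition is reported as in progress, which does not by itself prove that every move succeeded, so read the final status lines for failures.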

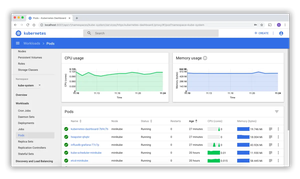

Monitoring Cluster Health

After expansion, keep monitoring the cluster to ensure its health. Use Kafka’s built-in commands and metrics to track broker status, the distribution of topic partitions across brokers, performance, and more.

Example:

# Describe topic to check partition distribution

bin/kafka-topics.sh --describe --topic example-topic --zookeeper zoo1:2181
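To judge at a glance whether leadership is spread evenly, the describe output can be summarized per broker. This sketch assumes each partition line contains a `Leader: <id>` pair separated by whitespace, which is how the tool normally prints it. The helper name `leaders_per_broker` is hypothetical.

```shell
# Hypothetical helper: read --describe output on stdin and print
# "<broker id> <number of partitions it leads>", one broker per line.
leaders_per_broker() {
  awk '{
         # On each partition line, the field after "Leader:" is the broker id.
         for (i = 1; i < NF; i++) if ($i == "Leader:") count[$(i + 1)]++
       }
       END { for (b in count) print b, count[b] }' | sort -n
}

# Example usage:
#   bin/kafka-topics.sh --describe --topic example-topic --zookeeper zoo1:2181 | leaders_per_broker
```

A broker that is missing from the output leads no partitions of that topic, which after an expansion usually means its partitions have not been reassigned yet or it only holds follower replicas.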

Advanced: Adding Rack Awareness for Better Fault Tolerance

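In multi-rack or multi-zone deployments, configuring rack awareness can further improve fault tolerance and availability. Set broker.rack on each broker to the rack or availability zone that broker runs in. Kafka then knows the network topology and tries to place the replicas of each partition on different racks or zones when it assigns them, so losing a single rack does not take every replica offline.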

Example:

# Configuring rack awareness

broker.rack=RackA

default.replication.factor=3

Conclusion

Expanding a Kafka cluster safely is crucial for supporting increased workloads and maintaining fault tolerance. The steps in this guide cover the full path: provision and configure new brokers, start them, reassign partitions, and verify the result. After expansion, keep monitoring the cluster regularly to detect issues early and maintain performance.