Introduction

Apache Kafka is a distributed event streaming platform used by thousands of companies for high-performance data pipelines, streaming analytics, data integration, and mission-critical applications. Monitoring the performance of a Kafka cluster is critical for managing its resources efficiently and ensuring that data flows through the system without bottlenecks or failures.

In this tutorial, we’ll explore how to track key performance metrics in Kafka, focusing on what metrics are important and how to access them with practical examples. We’ll start with the basics and gradually move to more advanced techniques, providing code samples and their expected outputs.

Prerequisites

Before we start, ensure you have a working Kafka cluster, and you’re familiar with Kafka’s core concepts like brokers, producers, consumers, topics, and partitions. Also, make sure you’ve installed the necessary Kafka command-line tools and have access to the metrics reporting systems, such as JMX (Java Management Extensions) or Prometheus with Grafana for visualization.

Basic Kafka Metrics to Monitor

There are several key performance metrics that you should regularly check on your Kafka cluster:

- Broker Metrics: Track the health of Kafka brokers, which are the heart of your Kafka cluster. Important metrics include CPU, memory, disk I/O, and network usage.

- Topic Metrics: Monitor throughput, peak times, and topic offsets to understand the activity around a particular topic.

- Consumer Metrics: Keep an eye on consumer lag, which indicates how far behind consumers are in processing messages compared to the latest offset available in the log.

Accessing Metrics via JMX

JMX provides a standardized way to monitor Java applications, including Kafka, which runs on the JVM. Kafka brokers do not open a remote JMX port by default; you enable one when starting the broker, either by setting the JMX_PORT environment variable or by passing the JMX system properties yourself. The following options expose JMX on port 9999 without authentication or SSL, which is suitable only on a trusted network:

KAFKA_JMX_OPTS="-Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.port=9999 -Dcom.sun.management.jmxremote.rmi.port=9999 -Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false" bin/kafka-server-start.sh config/server.properties

Once JMX is enabled, you can use various tools like jconsole, jvisualvm, or jmc to connect to the broker and monitor its metrics.

Reading Basic Broker Metrics

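Here’s an example command that reads a single broker metric with JmxTool through kafka-run-class.sh (in recent Kafka releases the class has moved to org.apache.kafka.tools.JmxTool):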

bin/kafka-run-class.sh kafka.tools.JmxTool --object-name kafka.server:type=BrokerTopicMetrics,name=MessagesInPerSec --jmx-url service:jmx:rmi:///jndi/rmi://:9999/jmxrmi

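This command reports the rate of messages received by the broker across all topics. JmxTool itself prints comma-separated rows at a regular interval; the attributes it reports are shown here in JSON form for readability, first as a list of names and then with illustrative values: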

{
  "mbean": "kafka.server:type=BrokerTopicMetrics,name=MessagesInPerSec",
  "attributes": [
    "Count",
    "MeanRate",
    "OneMinuteRate",
    "FiveMinuteRate",
    "FifteenMinuteRate",
    "RateUnit"
  ]
}

{
  "mbean": "kafka.server:type=BrokerTopicMetrics,name=MessagesInPerSec",
  "attributes": {
    "Count": 9821,
    "MeanRate": 20.84318,
    "OneMinuteRate": 25.67107,
    "FiveMinuteRate": 24.60000,
    "FifteenMinuteRate": 23.21305,
    "RateUnit": "SECONDS"
  }
}
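
Count is a cumulative total, while the rate attributes are moving averages maintained by the broker. If you want a rate over a window of your own choosing, you can derive it from two Count samples. The following is a minimal sketch in Python; the `interval_rate` helper, the 30-second window, and the second Count value are hypothetical and only illustrate the arithmetic.

```python
def interval_rate(count_start: int, count_end: int, seconds: float) -> float:
    """Average messages per second between two samples of the cumulative Count."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    if count_end < count_start:
        # A cumulative counter only goes down if it was reset (for example,
        # by a broker restart), so the two samples are not comparable.
        raise ValueError("Count decreased between samples")
    return (count_end - count_start) / seconds


# Two hypothetical samples taken 30 seconds apart; 9821 is the Count shown above.
first = {"Count": 9821}
second = {"Count": 10571}

rate = interval_rate(first["Count"], second["Count"], 30.0)
print(f"{rate:.1f} messages/sec")  # prints "25.0 messages/sec"
```

Comparing a value like this with OneMinuteRate and FifteenMinuteRate gives a rough sense of whether traffic is rising or falling.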

Intermediate Metrics Tracking

As you get familiar with basic metrics, you can dig deeper by examining more granular details such as end-to-end latency, request rate per broker, and replica lag.

Tracking Consumer Group Lag

One of the most important consumer metrics is lag: the difference between a partition’s log end offset and the offset the consumer group has last committed for that partition. You can check it with Kafka’s built-in command-line tools:

bin/kafka-consumer-groups.sh --bootstrap-server localhost:9092 --describe --group your_consumer_group

This command prints the current offset, log end offset, and lag for every topic partition that the specified consumer group is consuming:

GROUP                TOPIC       PARTITION  CURRENT-OFFSET  LOG-END-OFFSET  LAG  CONSUMER-ID
your_consumer_group  some_topic  0          1053            1100            47   consumer-1-xxx

Tracking lag is essential for confirming that your consumers keep up with the producers. In the output above, LAG is LOG-END-OFFSET minus CURRENT-OFFSET (1100 - 1053 = 47). Lag that keeps growing can indicate processing issues or the need for additional consumer instances.
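
If you capture the command's output in a script, the per-partition lag can be summed per topic. The sketch below is one way to do that in Python, assuming the whitespace-separated column layout shown above; the `lag_by_topic` helper and the second sample row are hypothetical, and non-numeric LAG values are simply skipped.

```python
from collections import defaultdict


def lag_by_topic(describe_output: str) -> dict:
    """Sum the LAG column per topic from `kafka-consumer-groups.sh --describe` text."""
    totals = defaultdict(int)
    topic_col = lag_col = None
    for line in describe_output.splitlines():
        fields = line.split()
        if not fields:
            continue
        if "TOPIC" in fields and "LAG" in fields:
            # Header row: remember where the columns of interest are.
            topic_col, lag_col = fields.index("TOPIC"), fields.index("LAG")
            continue
        if topic_col is None or len(fields) <= max(topic_col, lag_col):
            continue  # text before the header, or a row that is too short
        if fields[lag_col].isdigit():
            totals[fields[topic_col]] += int(fields[lag_col])
    return dict(totals)


# First data row is from the example above; the second row is made up.
sample = """\
GROUP                TOPIC       PARTITION  CURRENT-OFFSET  LOG-END-OFFSET  LAG  CONSUMER-ID
your_consumer_group  some_topic  0          1053            1100            47   consumer-1-xxx
your_consumer_group  some_topic  1          980             1000            20   consumer-1-xxx
"""

print(lag_by_topic(sample))  # prints "{'some_topic': 67}"
```

Looking up columns by header name rather than by fixed position keeps the parser working if the tool prints additional columns.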

Advanced Metric Collection with Prometheus and Grafana

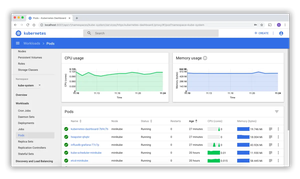

For robust monitoring, you might want to use a dedicated monitoring stack like Prometheus and Grafana. Tools like these give you real-time insight into your cluster’s performance and can provide historical data for trend analysis.

Setting Up Kafka Exporter

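Kafka Exporter is a popular open-source tool that connects to your cluster as a client and exposes topic, partition offset, and consumer group lag metrics for scraping by Prometheus. It does not cover broker JVM or host-level metrics such as CPU and disk I/O, which need their own exporters. To get started, download and run Kafka Exporter and point it to your Kafka cluster: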

./kafka_exporter --kafka.server=kafka:9092

Once running, Kafka Exporter serves Prometheus-formatted metrics over HTTP, by default on port 9308 at the /metrics path.
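
To get a feel for what Prometheus will scrape, it can help to read the exporter's text output directly. The sketch below parses a small sample of the Prometheus text format in Python and pulls out one metric. The sample text is illustrative rather than captured from a real cluster, and `parse_samples` is a hypothetical helper that handles only simple sample lines.

```python
import re

# A sample line looks like: metric_name{label="value",...} 123
SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>.*)\})?\s+(?P<value>\S+)'
)
LABEL_RE = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def parse_samples(text: str, metric: str) -> list:
    """Return (labels, value) pairs for one metric name in exposition text."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = SAMPLE_RE.match(line)
        if match and match.group("name") == metric:
            labels = dict(LABEL_RE.findall(match.group("labels") or ""))
            samples.append((labels, float(match.group("value"))))
    return samples


# Illustrative exporter output (values are made up).
exposition = """\
# TYPE kafka_consumergroup_lag gauge
kafka_consumergroup_lag{consumergroup="your_consumer_group",partition="0",topic="some_topic"} 47
kafka_brokers 3
"""

for labels, value in parse_samples(exposition, "kafka_consumergroup_lag"):
    print(labels["topic"], labels["partition"], value)  # prints "some_topic 0 47.0"
```

In practice you would fetch the text from the exporter's /metrics endpoint (for example with `urllib.request.urlopen`) instead of using a hard-coded string.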

Integrating Prometheus with Kafka

Prometheus can then be configured to scrape metrics from Kafka Exporter. You would add the following job to the scrape_configs section in your Prometheus configuration:

scrape_configs:
  - job_name: 'kafka'
    static_configs:
      - targets: ['localhost:9308']

And with the Kafka metrics now in Prometheus, you can set up dashboards in Grafana to visualize them.
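
Before building dashboards, you may want to confirm that the scraped metrics are queryable. The sketch below shows how a script might build a request for Prometheus's instant-query HTTP endpoint and read the result. It assumes Prometheus listens on localhost:9090 and that the exporter's `kafka_consumergroup_lag` metric is present; the helper names and the canned response are hypothetical.

```python
import json
from urllib.parse import urlencode


def instant_query_url(base_url: str, promql: str) -> str:
    """Build the URL for Prometheus's instant-query endpoint (/api/v1/query)."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': promql})}"


def values_by_topic(response_body: str) -> dict:
    """Map each series' 'topic' label to its value in an instant-vector response."""
    payload = json.loads(response_body)
    if payload.get("status") != "success":
        raise RuntimeError(f"query failed: {payload.get('error')}")
    return {
        series["metric"].get("topic", ""): float(series["value"][1])
        for series in payload["data"]["result"]
    }


url = instant_query_url("http://localhost:9090", "sum by (topic) (kafka_consumergroup_lag)")
print(url)

# Canned response in the shape Prometheus returns for an instant vector.
canned = json.dumps({
    "status": "success",
    "data": {
        "resultType": "vector",
        "result": [{"metric": {"topic": "some_topic"}, "value": [1700000000.0, "47"]}],
    },
})
print(values_by_topic(canned))  # prints "{'some_topic': 47.0}"
```

Against a live server, the response body would come from an HTTP GET on the printed URL rather than from the canned string.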

Creating Granular Alerts and Dashboards

Finally, with your metrics being collected, you can set up detailed dashboards and alerts within Grafana. Typical panels cover broker CPU, disk I/O, and network usage, consumer lag, partitions that carry a disproportionate share of traffic, and more. Alerts can be configured to notify you when thresholds are exceeded, allowing for proactive intervention.
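
Alerting on a single spike tends to be noisy, so alert rules commonly require a condition to hold for some time before firing. The Python sketch below illustrates that idea outside any particular tool. The `sustained_breach` helper, the threshold of 1000, and the lag readings are all hypothetical.

```python
def sustained_breach(samples: list, threshold: float, min_consecutive: int) -> bool:
    """True if the most recent `min_consecutive` samples all exceed `threshold`."""
    if min_consecutive <= 0:
        raise ValueError("min_consecutive must be positive")
    recent = samples[-min_consecutive:]
    return len(recent) == min_consecutive and all(v > threshold for v in recent)


# Hypothetical consumer lag readings, oldest first.
print(sustained_breach([40, 1200, 1500, 1800], threshold=1000, min_consecutive=3))  # prints "True"
print(sustained_breach([1200, 40, 1500], threshold=1000, min_consecutive=3))        # prints "False"
```

The second call returns False because the lag dipped below the threshold in between, which is the kind of brief fluctuation a sustained condition is meant to ignore.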

Conclusion

This tutorial provided an overview of which metrics matter most in a Kafka cluster and how to go from basic command-line tools to a full-fledged monitoring stack. By carefully tracking these Kafka performance metrics, you can ensure that your event streaming systems remain healthy and efficient.