Introduction

With the rise of real-time analytics and the need for robust, scalable messaging systems, Apache Kafka has become a popular choice for many organizations looking to upgrade their messaging infrastructure. In this tutorial, we will cover the essential steps and considerations for migrating from traditional messaging systems to Kafka.

Understanding Kafka

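Before diving into the migration process, it’s crucial to understand what Kafka is and how it differs from traditional messaging systems. Kafka is a distributed streaming platform capable of handling high volumes of data, and it is designed for durability, scalability, and fault tolerance. Unlike a traditional queue, which typically deletes a message once it has been consumed, Kafka keeps records in a partitioned, append-only log for a configured retention period, and each consumer group tracks its own position (offset) in that log.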

Core Components:

- Producer: An application that sends messages (records) to Kafka.

- Consumer: An application that reads messages from Kafka.

- Broker: A Kafka server that stores data and serves clients.

- Topic: A named category or feed to which records are published.

- Partition: An ordered, append-only slice of a topic. Topics are split into partitions for scalability and parallelism.
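To make the role of partitions more concrete, here is a minimal sketch of how a keyed record can be assigned to a partition by hashing its key. It is a simplification: Kafka's default partitioner hashes the serialized key bytes with murmur2, while this sketch uses `String.hashCode()` as a stand-in. The class name `PartitionSketch` and the sample keys are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Illustrative only: String.hashCode() stands in for the murmur2 hash
// that Kafka's default partitioner applies to the serialized key.
final class PartitionSketch {

    // Map a key to one of numPartitions partitions.
    static int partitionFor(String key, int numPartitions) {
        // Mask the sign bit so the result is never negative.
        return (key.hashCode() & 0x7fffffff) % numPartitions;
    }

    public static void main(String[] args) {
        int numPartitions = 3; // hypothetical partition count
        Map<Integer, List<String>> assignment = new TreeMap<>();
        for (String key : List.of("order-1", "order-2", "customer-7", "order-1")) {
            assignment
                .computeIfAbsent(partitionFor(key, numPartitions), p -> new ArrayList<>())
                .add(key);
        }
        // Records that share a key always land in the same partition,
        // which is what preserves per-key ordering.
        assignment.forEach((p, keys) -> System.out.println("partition " + p + " -> " + keys));
    }
}
```

Because the partition is derived from the key, changing the number of partitions later changes where existing keys map, which is one reason to choose the partition count carefully during planning.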

Pre-Migration Planning

Successful migration to Kafka involves careful planning. Begin by analyzing your current system in terms of throughput, data retention policies, and fault tolerance. Understanding your system’s requirements will help you configure Kafka appropriately.

Inventory of Existing System

// Pseudocode: enumerate the endpoints and interfaces (queues, topics, producers, consumers) of your current system

listEndpoints(currentMessagingSystem);
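One practical output of the inventory is a mapping from legacy destination names to Kafka topic names. Kafka topic names may only contain ASCII letters, digits, `.`, `_`, and `-`, and are limited to 249 characters. The sketch below derives a candidate topic name for each legacy destination; the class `TopicInventory`, its methods, and the sample destination names are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper: derives Kafka-legal topic names from legacy destination names.
final class TopicInventory {

    static final int MAX_TOPIC_LENGTH = 249;

    // Replace every character Kafka does not allow in a topic name with '-'.
    static String toTopicName(String legacyName) {
        String cleaned = legacyName.trim().replaceAll("[^a-zA-Z0-9._-]", "-");
        if (cleaned.length() > MAX_TOPIC_LENGTH) {
            cleaned = cleaned.substring(0, MAX_TOPIC_LENGTH);
        }
        // "." and ".." are not valid topic names.
        if (cleaned.isEmpty() || cleaned.equals(".") || cleaned.equals("..")) {
            throw new IllegalArgumentException("Cannot derive a topic name from: " + legacyName);
        }
        return cleaned;
    }

    // Build the legacy-name -> topic-name plan, preserving inventory order.
    static Map<String, String> plan(List<String> legacyDestinations) {
        Map<String, String> mapping = new LinkedHashMap<>();
        for (String destination : legacyDestinations) {
            mapping.put(destination, toTopicName(destination));
        }
        return mapping;
    }

    public static void main(String[] args) {
        plan(List.of("queue://orders/new", "billing.events"))
            .forEach((legacy, topic) -> System.out.println(legacy + " -> " + topic));
    }
}
```

Two different legacy names can collapse to the same topic name after cleaning, so the resulting plan is worth reviewing by hand before any topics are created.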

Define Requirements

// Pseudocode: record the throughput and retention requirements for the Kafka setup

setThroughputRequirement(threshold);

setRetentionPolicy(duration);
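As a rough illustration of turning these requirements into numbers, the sketch below applies a commonly cited rule of thumb: use at least as many partitions as the target throughput divided by the measured per-partition producer or consumer throughput, whichever result is larger. It also converts a retention period into a value for the topic-level `retention.ms` setting. The class `KafkaSizing`, its method names, and the figures in `main` are assumptions for illustration; real per-partition throughput has to be measured on your own hardware.

```java
import java.time.Duration;

// A sizing sketch under stated assumptions, not a definitive capacity model.
final class KafkaSizing {

    // Rule of thumb: partitions >= max(target / producerRate, target / consumerRate).
    static int estimatePartitions(double targetMbPerSec,
                                  double producerMbPerSecPerPartition,
                                  double consumerMbPerSecPerPartition) {
        double forProducers = targetMbPerSec / producerMbPerSecPerPartition;
        double forConsumers = targetMbPerSec / consumerMbPerSecPerPartition;
        return (int) Math.max(1, Math.ceil(Math.max(forProducers, forConsumers)));
    }

    // Approximate disk needed across the cluster: rate x retention x replicas (in GB).
    static double estimateStorageGb(double targetMbPerSec, Duration retention, int replicationFactor) {
        return targetMbPerSec * retention.getSeconds() * replicationFactor / 1024.0;
    }

    // Value for the topic-level retention.ms setting.
    static String retentionMs(Duration retention) {
        return Long.toString(retention.toMillis());
    }

    public static void main(String[] args) {
        // Hypothetical figures: 50 MB/s target, 10 MB/s per partition when
        // producing, 20 MB/s per partition when consuming, 7 days of retention.
        System.out.println("partitions   = " + estimatePartitions(50, 10, 20));
        System.out.println("storage (GB) = " + estimateStorageGb(50, Duration.ofDays(7), 3));
        System.out.println("retention.ms = " + retentionMs(Duration.ofDays(7)));
    }
}
```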

Kafka Environment Setup

Once planning is complete, set up your Kafka environment. This typically involves installing Kafka brokers, setting up ZooKeeper (which Kafka 2.8 uses by default for cluster coordination), and defining topics.

Installing Kafka

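# Download and unpack Kafka 2.8.0 (older releases are kept on the Apache archive)

wget https://archive.apache.org/dist/kafka/2.8.0/kafka_2.13-2.8.0.tgz

tar -xzf kafka_2.13-2.8.0.tgz

cd kafka_2.13-2.8.0

# Start ZooKeeper first, in the background; the broker needs it for coordination

bin/zookeeper-server-start.sh -daemon config/zookeeper.properties

# Start the Kafka broker (runs in the foreground)

bin/kafka-server-start.sh config/server.properties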

Creating Topics

# Create a topic with 3 partitions (run in a second terminal while the broker is running)

cd kafka_2.13-2.8.0

bin/kafka-topics.sh --create --bootstrap-server localhost:9092 --replication-factor 1 --partitions 3 --topic your-topic
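The command above uses a replication factor of 1, which is convenient on a single local broker but keeps only one copy of each partition. Before creating topics on a real cluster, it can help to sanity-check the intended settings. The sketch below encodes a few well-known constraints: the replication factor cannot exceed the number of brokers, and `min.insync.replicas` should not exceed the replication factor. The class `TopicSettingsCheck` and its messages are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical pre-flight check for topic settings; returns a list of findings.
final class TopicSettingsCheck {

    static List<String> check(int brokers, int replicationFactor, int partitions, int minInsyncReplicas) {
        List<String> findings = new ArrayList<>();
        if (partitions < 1) {
            findings.add("partitions must be at least 1");
        }
        if (replicationFactor < 1) {
            findings.add("replication factor must be at least 1");
        }
        if (replicationFactor > brokers) {
            findings.add("replication factor exceeds the number of brokers");
        }
        if (minInsyncReplicas > replicationFactor) {
            findings.add("min.insync.replicas is larger than the replication factor");
        }
        if (replicationFactor == 1) {
            findings.add("warning: replication factor 1 keeps a single copy of the data");
        }
        return findings;
    }

    public static void main(String[] args) {
        // Settings from the local example: 1 broker, replication factor 1, 3 partitions.
        check(1, 1, 3, 1).forEach(System.out::println);
    }
}
```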

Data Migration

Transferring data from your traditional system to Kafka is a critical phase of the migration. This may involve batch processes or a live migration, depending on the system’s requirements.

Sample Code for Data Transfer:

// Pseudocode: copy messages from the current system's endpoint to a Kafka broker

transferData(currentSystemEndpoint, kafkaBroker);
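A batch transfer can be sketched as a loop that reads messages from the old system, hands them to a sink, and flushes periodically so that a failure loses at most one batch of progress. In the sketch below, `Message`, `Sink`, and `migrate` are hypothetical names. In a real migration the `Sink` would wrap a Kafka producer and the iterator would read from the legacy system; here both are left abstract so the loop itself can be run and tested on its own.

```java
import java.util.Iterator;

// A minimal batch-transfer sketch; the Kafka producer is hidden behind Sink.
final class BatchMigrator {

    // One message read from the legacy system.
    static final class Message {
        final String key;
        final String value;

        Message(String key, String value) {
            this.key = key;
            this.value = value;
        }
    }

    // Hypothetical abstraction over the destination (for example, a producer wrapper).
    interface Sink {
        void send(String topic, Message message);

        void flush();
    }

    // Copy every message from source to sink, flushing after each full batch.
    // Returns the number of messages transferred.
    static long migrate(Iterator<Message> source, Sink sink, String topic, int batchSize) {
        if (batchSize < 1) {
            throw new IllegalArgumentException("batchSize must be at least 1");
        }
        long sent = 0;
        while (source.hasNext()) {
            sink.send(topic, source.next());
            sent++;
            if (sent % batchSize == 0) {
                sink.flush(); // checkpoint after each full batch
            }
        }
        sink.flush(); // flush whatever remains in the final partial batch
        return sent;
    }
}
```

Comparing the returned count with the number of messages the legacy system reports is a simple first check that nothing was dropped.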

Integrating Producers and Consumers

After setting up Kafka and migrating the data, you must integrate your producers and consumers. This includes updating the applications to use Kafka’s APIs.

Producer API Example

// Java example of a simple Kafka producer

import java.util.Properties;

import org.apache.kafka.clients.producer.*;

public class SimpleProducer {

    public static void main(String[] args) {

        Properties props = new Properties();

        props.put("bootstrap.servers", "localhost:9092");

        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

        Producer<String, String> producer = new KafkaProducer<>(props);

        // send() is asynchronous; the record is buffered and sent in the background

        producer.send(new ProducerRecord<>("your-topic", "key", "value"));

        // close() waits for buffered records to be sent before releasing resources

        producer.close();

    }

}

Consumer API Example

// Java example of a simple Kafka consumer

import java.time.Duration;

import java.util.Arrays;

import java.util.Properties;

import org.apache.kafka.clients.consumer.*;

public class SimpleConsumer {

    public static void main(String[] args) {

        Properties props = new Properties();

        props.put("bootstrap.servers", "localhost:9092");

        props.put("group.id", "test-consumer-group");

        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

        // try-with-resources closes the consumer if the loop exits with an exception

        try (Consumer<String, String> consumer = new KafkaConsumer<>(props)) {

            consumer.subscribe(Arrays.asList("your-topic"));

            // Poll indefinitely; stop the process to end the loop

            while (true) {

                ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(100));

                for (ConsumerRecord<String, String> record : records) {

                    System.out.printf("offset = %d, key = %s, value = %s%n", record.offset(), record.key(), record.value());

                }

            }

        }

    }

}

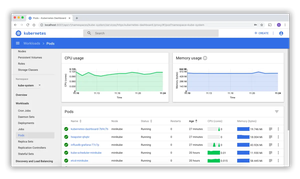
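During a migration, two consumer settings deserve attention. A brand-new consumer group has no committed offsets, so `auto.offset.reset` decides whether it starts from the beginning of the topic (`earliest`) or only sees new records (`latest`). Setting `enable.auto.commit` to `false` lets the application commit offsets only after it has processed a batch. The sketch below builds such a configuration with plain `java.util.Properties`. The class `MigrationConsumerConfig` is a hypothetical helper, and the chosen values are one reasonable option rather than a recommendation for every workload.

```java
import java.util.Properties;

// Hypothetical helper that assembles consumer settings suited to reading a migrated backlog.
final class MigrationConsumerConfig {

    static Properties build(String bootstrapServers, String groupId) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("group.id", groupId);
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        // A new group has no committed offsets: start from the oldest available record
        // so that data migrated before the consumer started is not skipped.
        props.put("auto.offset.reset", "earliest");
        // Commit offsets explicitly after processing instead of on a timer.
        props.put("enable.auto.commit", "false");
        return props;
    }

    public static void main(String[] args) {
        build("localhost:9092", "test-consumer-group")
            .forEach((key, value) -> System.out.println(key + " = " + value));
    }
}
```

With auto-commit disabled, the poll loop needs to call `consumer.commitSync()` (or `commitAsync()`) after each processed batch; otherwise the group will re-read the same records after a restart.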

Monitoring and Tuning

Once your applications are integrated with Kafka, it’s essential to monitor the system and tune configurations for optimum performance.

Monitoring Tools

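# Kafka ships with a command-line tool that reports consumer group progress

# Show the current offset, log end offset, and lag for each partition of a group

bin/kafka-consumer-groups.sh --bootstrap-server localhost:9092 --describe --group test-consumer-group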
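Consumer lag is one of the most useful signals to watch after a migration: for each partition, it is the difference between the log end offset and the offset the consumer group has committed. The sketch below computes it from two maps of partition number to offset. The class `ConsumerLag` is hypothetical, and treating a partition with no committed offset as committed at 0 is a simplifying assumption.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.TreeMap;

// Computes per-partition and total consumer lag from offset snapshots.
final class ConsumerLag {

    // lag = log end offset - committed offset, never below zero.
    static Map<Integer, Long> lagPerPartition(Map<Integer, Long> logEndOffsets,
                                              Map<Integer, Long> committedOffsets) {
        Map<Integer, Long> lag = new TreeMap<>();
        for (Map.Entry<Integer, Long> entry : logEndOffsets.entrySet()) {
            // Assumption: a partition without a committed offset counts from 0.
            long committed = committedOffsets.getOrDefault(entry.getKey(), 0L);
            lag.put(entry.getKey(), Math.max(0L, entry.getValue() - committed));
        }
        return lag;
    }

    static long totalLag(Map<Integer, Long> lag) {
        long total = 0;
        for (long value : lag.values()) {
            total += value;
        }
        return total;
    }

    public static void main(String[] args) {
        // Hypothetical snapshot for a 3-partition topic.
        Map<Integer, Long> end = new HashMap<>();
        end.put(0, 100L);
        end.put(1, 50L);
        end.put(2, 10L);
        Map<Integer, Long> committed = new HashMap<>();
        committed.put(0, 90L);
        committed.put(1, 50L);
        Map<Integer, Long> lag = lagPerPartition(end, committed);
        System.out.println("lag per partition: " + lag + ", total: " + totalLag(lag));
    }
}
```

Lag that keeps growing suggests the consumers cannot keep up with the producers, which is usually the cue to add consumers (up to the partition count) or revisit the tuning parameters.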

Performance Tuning

// Pseudocode: apply tuning parameters such as concurrency level and buffer sizes

setKafkaTuningParameters(concurrencyLevel, bufferSizes);
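On the producer side, tuning mostly means trading latency for throughput through batching, compression, and buffer sizes, while keeping delivery guarantees explicit. The sketch below collects a few real producer settings (`acks`, `enable.idempotence`, `linger.ms`, `batch.size`, `compression.type`, `buffer.memory`) into a `Properties` object. The class `ProducerTuning` is hypothetical, and the specific values are illustrative starting points that need to be validated with load tests on your own cluster.

```java
import java.util.Properties;

// Hypothetical helper: a throughput-oriented producer configuration to start tuning from.
final class ProducerTuning {

    static Properties throughputOriented(String bootstrapServers) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        // Durability: wait for all in-sync replicas and avoid duplicates on retry.
        props.put("acks", "all");
        props.put("enable.idempotence", "true");
        // Batching: wait up to 20 ms to fill batches of up to 64 KiB per partition.
        props.put("linger.ms", "20");
        props.put("batch.size", "65536");
        // Compress whole batches to reduce network and disk usage.
        props.put("compression.type", "lz4");
        // Total memory the producer may use to buffer unsent records (64 MiB).
        props.put("buffer.memory", "67108864");
        return props;
    }

    public static void main(String[] args) {
        throughputOriented("localhost:9092")
            .forEach((key, value) -> System.out.println(key + " = " + value));
    }
}
```

The returned `Properties` can be passed directly to the `KafkaProducer` constructor used in the producer example; changing one setting at a time makes it easier to attribute any change in throughput or latency.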

Conclusion

Migrating to Kafka can be a complex but rewarding endeavor, offering high throughput, improved data handling, and scalability. Careful planning, a properly configured environment, a verified data migration, updated producers and consumers, and ongoing monitoring and tuning together give the transition the best chance of success.